PERSPECTIVE: A Health Rights Impact Assessment Guide for Artificial Intelligence Projects

Volume 22/2, December 2020, pp. 55–62

Carmel Williams

Introduction

Artificial intelligence (AI) is being hailed by various actors, including United Nations agencies, as having the potential to alleviate poverty, reduce inequalities, and help attain the Sustainable Development Goals (SDGs).[1] Many AI projects are promoted as making important contributions to health care and to reducing global and national health inequities.

However, one of the risks of AI-driven health projects is that they can be singularly focused on one health problem and implemented to resolve that one problem, without consideration of how a whole health system is needed to enable any one “solution” to function in both the short and long term. Health projects that have not been designed in participation with local people have a history of failing, and externally funded development projects are especially vulnerable. In terms of human rights, such failings can be attributed to a lack of participation, an imbalance of power, and failure to observe the critically important role of key institutions such as the health system in fulfilling people’s health rights.

Health projects that fail can have negative consequences beyond their own failed missions, and they risk harming human rights generally and the right to health specifically. Equitable access to quality health care is dependent on a well-functioning health system; and such a system is regarded as the core institution through which the right to health can be fulfilled.[2] If a new project weakens the health system, perhaps by attracting a disproportionate number of health workers to it, or overloading diagnostic or supply chain services, or drawing finances away from other core services, then it is negatively affecting state obligations to fulfill the right to health. These risks may be greatest where health systems are weakest—usually in low- and middle-income countries.

This perspective argues that the way to mitigate these risks is to conduct a health rights impact assessment before an AI health project is implemented. It introduces a tool that enables a systematic process of health rights assessment to take place.

Background: WHO guideline

In 2019, the World Health Organization (WHO) introduced a guideline on how to use digital technology to strengthen health systems.[3] The guideline provides useful indicators for assessing some of the impacts of AI on health systems, but it fails to recognize the centrality of health systems to the fulfilment of the right to health.

The guideline followed a resolution brought to the World Health Assembly in 2018 that recognized the value of digital technologies (including AI) and their capacity to advance universal health coverage and the SDGs.[4] However, the guideline concedes that enthusiasm for digital health has seen many short-lived implementations, an overwhelming diversity of digital tools, and a limited understanding of their impact on health systems and people’s well-being.[5] It stresses the need to evaluate the positive and negative impacts of proposed digital health technologies and to ensure that such investments do not inappropriately divert resources from alternative, nondigital approaches and thereby increase health inequities.[6]

The guideline advises that digital health technologies should complement and enhance health system functions, rather than replace the fundamental components needed by health systems, such as the health workforce, financing, leadership and governance, and access to essential medicines.[7] It calls for an assessment of the health system’s ability to absorb digital interventions and warns that new technology must not jeopardize the provision of quality nondigital services in places where digital technologies cannot be deployed. It demonstrates the assessment of various applications of health-related technology based on effectiveness, acceptability, feasibility, resource use, and “gender, equity and human rights.” The guideline encourages technology developers to work with users and to think broadly about context both within and beyond the health system, as well as to consider whether a given digital health intervention will improve universal health coverage. Although human rights are included with the “gender, equity and human rights” component for impact analysis, the specific indicator selected to assess this component is limited to the technology’s impact on equity.[8]

But equity—important as it may be—is only one human rights consideration. It is also necessary to examine other key principles of the right to health when assessing health interventions.[9] Although the WHO guideline examines various components of a health system when the component is directly affected by the technology, it fails to systematically examine the whole health system to identify any less obvious, indirect impacts of the proposed new technology.

In response, this paper presents an expanded tool to help states and other actors undertake a right to health impact assessment prior to implementing AI projects. The tool, informed by the WHO guideline, is a refinement of an earlier impact assessment tool for aid-funded health projects in low-resource settings.[10] It accommodates additional considerations necessary when AI health projects are under development. It explores possible impacts, specifically on the right to health, moving beyond the civil and political rights most frequently associated with digital health, big data, and AI: namely, data privacy and protection, security, and algorithm transparency. It is a guide that provides a sample of the type of questions across the health system that need to be explored, but each project will need its own context-specific adjustments.

The right to health and health systems

Because the health system is the core institution through which the right to health can be realized, governments and other agencies have a duty to ensure that health systems are enabled to fulfill people’s entitlements to available, accessible, acceptable, and quality health services (AAAQ).[11] Accordingly, governments have a human rights obligation to ensure that health systems are never weakened but rather continually improved as part of their progressive realization duties, as detailed in General Comment 14 of the Committee on Economic, Social and Cultural Rights.[12] One way to prevent a weakening of the health system while demonstrating a commitment to the progressive realization of the right to health is to carry out human rights impact assessments prior to adopting and implementing policies and programs.[13] This applies to projects relating to digital health, projects driven by AI (irrespective of whether they are government or nonstate initiatives), and projects driven by local funding or through international assistance and cooperation.

In order to conduct a health rights impact assessment on a health system, it is convenient to compartmentalize the system to enable impacts to be measured across its many functions. A useful schematic devised by WHO identifies the component parts that contribute to the delivery of health care: health services and facilities; health workers; health financing; medicines, products, and other supplies; health information systems; and management and governance.[14] Importantly, there is more to a health system than these technocratic elements: people and communities must also be included, as the right to health entitles them to participate in a meaningful way in the planning, delivery, and monitoring of health care and health promotion. Human rights-based approaches to health care and health projects promote the active engagement of people who will be using services, as well as the understanding that people are legally entitled to these services as a function of their right to health. Without people’s participation, health services cannot achieve AAAQ for all.

Health rights impact assessment

A health rights impact assessment is a systematic examination of a project, undertaken prior to its implementation, to anticipate the effect that it will have on human rights and health, including and extending beyond its own project-related goals. It should not be confused with, nor replaced by, a needs assessment, which is a narrower exercise that does not assess risks. A health rights impact assessment predicts immediate and longer-term impacts on the whole health system by examining each of the system’s component parts and assessing the ways in which the project could strengthen or weaken that component. If risks are identified, an impact assessment considers ways to mitigate them. The purpose of such an assessment is at least twofold: it aims to strengthen the project by ensuring that it is in alignment with the health system and its governing strategies and plans; and it aims to strengthen the health system by helping design projects that will be sustainable and contribute to the protection and fulfilment of health rights.[15]
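The component-by-component process described above can be sketched as a simple checklist. The following Python snippet is only an illustration and is not part of the tool presented in this perspective: the class and function names (`Effect`, `Finding`, `review`) and the sample findings are hypothetical, and the component labels are taken from the WHO schematic described earlier, with people and communities added.

```python
from dataclasses import dataclass
from enum import Enum


class Effect(Enum):
    """Anticipated effect of a project on one health system component."""
    STRENGTHENS = "strengthens"
    NEUTRAL = "neutral"
    WEAKENS = "weakens"


# Labels follow the WHO schematic cited in the text, plus people and
# communities. The exact wording is illustrative, not an official list.
COMPONENTS = (
    "health services and facilities",
    "health workers",
    "health financing",
    "medicines, products, and other supplies",
    "health information systems",
    "management and governance",
    "people and communities",
)


@dataclass
class Finding:
    """One hypothetical assessment entry for a single component."""
    component: str
    effect: Effect
    note: str = ""
    mitigation: str = ""  # empty string means no mitigation proposed yet


def review(findings):
    """Return (components not yet assessed, weakening findings lacking mitigation)."""
    assessed = {f.component for f in findings}
    unassessed = [c for c in COMPONENTS if c not in assessed]
    unmitigated = [
        f for f in findings
        if f.effect is Effect.WEAKENS and not f.mitigation
    ]
    return unassessed, unmitigated


# Invented sample findings, loosely based on the risks named in the introduction.
findings = [
    Finding("health workers", Effect.WEAKENS,
            note="project could draw staff away from core services"),
    Finding("health financing", Effect.WEAKENS,
            note="finances could be drawn away from other core services",
            mitigation="agree a costed plan with the health ministry before launch"),
    Finding("health information systems", Effect.STRENGTHENS),
]

unassessed, unmitigated = review(findings)
print("Not yet assessed:", unassessed)
print("Risks without mitigation:", [f.component for f in unmitigated])
```

In this sketch an assessment counts as incomplete while any component remains unassessed or any anticipated weakening lacks a mitigation. That mirrors the requirement to examine the whole health system rather than only the components a project touches directly. A real assessment would rest on context-specific questions and participation, not on a fixed data structure.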

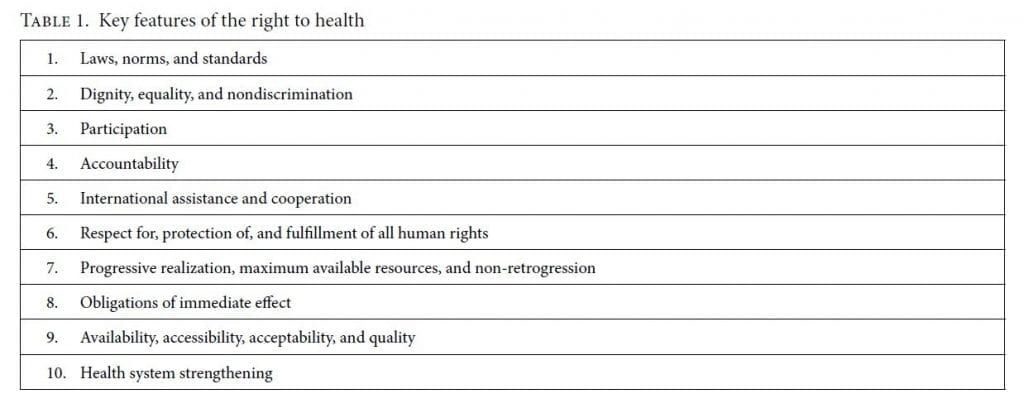

Conducting a health rights impact assessment when a project is being designed can help governments adopt and implement policies, programs, and projects that will best meet their obligations to take deliberate and concrete steps toward the progressive realization of human rights.[16] It serves a further purpose as well, by promoting engagement with the key features of the right to health, outlined in Table 1.[17]

AI for health care in low-resource settings

Even before the extraordinary pressures of the COVID-19 pandemic, health systems worldwide were facing challenges, including greater demands for services with the rising burden of disease, increasing costs, poor productivity, and overstretched human resources. It would therefore be of great benefit to health systems and communities if technological advances could help reduce burdens on systems and health care costs while increasing accessibility and equity.[18] In an opening address to the “AI for Good Summit” in 2019, the secretary-general of the International Telecommunication Union, Houlin Zhao, urged the audience to “turn [the] data revolution into a development revolution.”[19] To achieve this revolution, though, it is imperative that the development context is fully understood and reflected in the data solutions. Development has a long history of failed projects, especially those dependent on technology.[20] Enthusiastic donors can be persuasive partners when seeking to test new technologies in low-resource settings, and governments in these settings are presently indicating that they are “open for business” when it comes to AI partnerships.[21] AI-based technology partnerships carry the well-known pitfalls of earlier projects, such as a lack of ongoing technical or health worker support or of funding for maintenance, and additional traps, as yet unknown, can arise from downstream data ownership, sharing, and reuse.[22]

AI is being used in health care in various ways, including in diagnosis (especially imaging), patient management, treatment (for example, robotics in surgery), and new drug development.[23] In the wake of COVID-19, technology is also playing a large part in contact tracing, where it monitors the spread of the epidemic, and in the race to develop a vaccine.[24] But many of these uses demand a level of technical capacity well beyond that available to health systems in low-resource settings. Even if the technology is designed elsewhere and imported, its ongoing use requires an adequate, well-trained, and available workforce; infrastructure (including, at the very least, electricity and internet); and accessible health facilities so that the benefits of such advances are equitably available to all people. Designing data-driven technological projects for health care in low-resource settings requires a detailed understanding of their challenging contexts; otherwise, the interventions will almost certainly be inappropriate or unsustainable. It is difficult to acquire such an understanding from afar. But even locally developed AI-based technological solutions can fail to respect and protect human rights if they are not supporting the local health system in meeting the health rights of the people in its jurisdiction.[25]

Thus, regardless of whether AI health projects are being introduced in a development context (a focus of AI for Good) or in high-income countries, it is imperative that systematic health rights impact assessments are undertaken and that they are broad enough to anticipate impacts on the health system components, as well as on civil and political rights relating to data privacy, ownership, and security.

Adapting a health rights impact assessment tool for technology projects

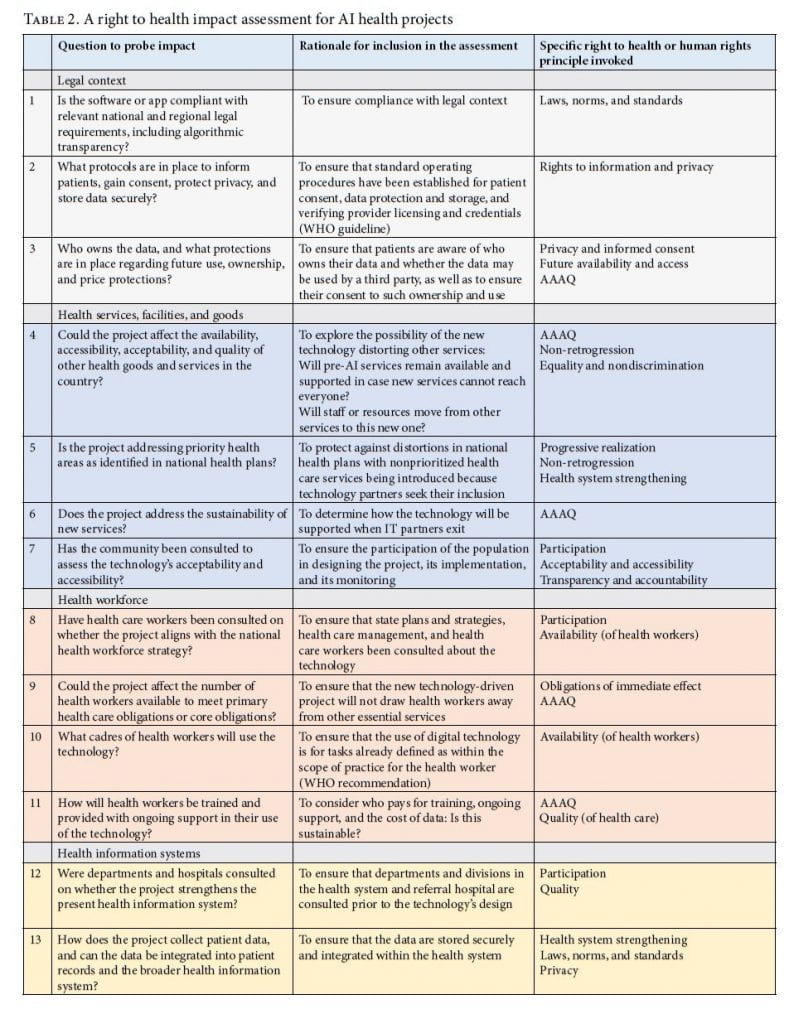

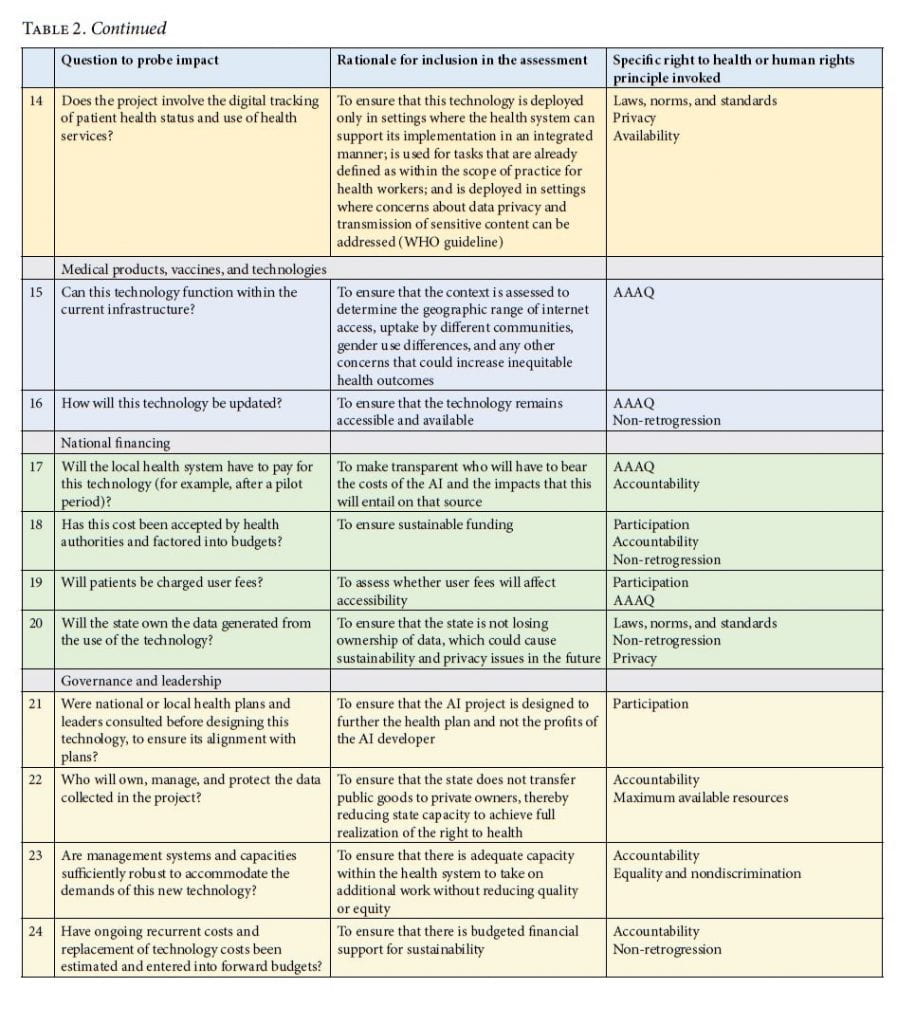

Presented in Table 2, this assessment tool is framed to guide the development of AI for health projects that comply with a right to health framework in local contexts. Each of the proposed questions is linked to at least one of the key features of the right to health. Therefore, indicators are selected to assess impact on the health system and on the right to health.

Discussion

This perspective has presented a rationale for undertaking a right to health impact assessment before implementing AI health projects in high- or low-resource settings. Such assessments are in keeping with the United Nations’ draft business and human rights instrument to regulate the activities of businesses and transnational corporations.[26] The tool in Table 2 demonstrates the range of questions that need to be addressed before implementing AI projects to determine how a new technology might affect the health system and, therefore, the right to health.

Introducing an app that can, for example, diagnose skin cancer or detect a pregnant person’s increased risk of pre-term birth does nothing to fulfill people’s right to health entitlements, or to advance universal health coverage, if there are no suitable treatments available for skin cancer or no secondary-level obstetric services accessible to those who need them. Every component of the health system must be functioning well before a service can become equitably available, accessible, acceptable, and of good quality; and if these and other right to health features are not achieved, people’s rights cannot be fulfilled. This tool includes questions that probe the technocratic aspects of the health system, which are crucially important, and that also assess other key right to health principles, including participation, accountability, equality and nondiscrimination, non-retrogression, and international cooperation. It is not enough for developers of a new AI application to claim that their application will address one health service and will therefore “help achieve SDG3 and universal health coverage”; without a right to health impact assessment, there can be no confidence that this is a likely outcome. Similarly, all human rights are interrelated and indivisible, which means that a rights-based app assessment must look beyond the health sector to determine how the technology could also affect other rights, including those related to privacy, confidentiality, and security.

The long-term sustainability of a technology-based business depends not only on states’ and businesses’ fulfillment of their obligations to protect human rights but also on the development of products that service providers find useful, affordable, and efficient, and that are acceptable to rights holders, including men, women, and children. These criteria apply whether the technology is state or nonstate owned and developed.

Funding

This work was supported by the UK’s Economic and Social Research Council (grant number ES/M010236/1).

Carmel Williams, PhD, is a researcher on the Human Rights, Big Data and Technology Project, Human Rights Centre, University of Essex, and Executive Editor of Health and Human Rights Journal.

Please address correspondence to the author. Email: williams@hsph.harvard.edu.

Competing interests: None declared.

Copyright © 2020 Williams. This is an open access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (http://creativecommons.org/licenses/by-nc/4.0/), which permits unrestricted noncommercial use, distribution, and reproduction in any medium, provided the original author and source are credited.

References

[1] See, for example, International Telecommunication Union, AI for Good Global Summit 2020: Accelerating the UN Sustainable Development Goals. Available at https://aiforgood.itu.int/.

[2] P. Hunt and G. Backman, “Health systems and the right to the highest attainable standard of health,” Health and Human Rights Journal 10/1 (2008), pp. 81–92; G. Backman, P. Hunt, R. Khosla, et al., “Health systems and the right to health: An assessment of 194 countries,” Lancet 372/9655 (2008), pp. 2047–2085.

[3] World Health Organization, WHO guideline: Recommendations on digital interventions for health system strengthening (Geneva: World Health Organization, 2019), p. ix.

[4] World Health Assembly, Resolution 71.7, A71/A/CONF./1 (2018).

[5] World Health Organization (2019, see note 3), p. ix.

[6] Ibid., p. ix.

[7] Ibid., p. xi.

[8] Ibid., p. 28.

[9] C. Williams, A. Blaiklock, and P. Hunt, “The right to health supports global public health,” in Oxford global public health textbook, 7th edition (Oxford: Oxford University Press, forthcoming).

[10] C. Williams and G. Brian, “Using health rights to improve programme design: A Papua New Guinea case study,” International Journal of Health Planning and Management 27 (2012), pp. 246–256; C. Williams and G. Brian, “Using a rights-based approach to avoid harming health systems: A case study from Papua New Guinea,” Journal of Human Rights Practice 5/1 (2013), pp. 177–194.

[11] Committee on Economic, Social and Cultural Rights (CESCR), General Comment No. 14, The Right to the Highest Attainable Standard of Health, UN Doc. E/C.12/2000/4 (2000).

[12] Ibid.

[13] G. MacNaughton and P. Hunt, “Health impact assessment: The contribution of the right to the highest attainable standard of health,” Public Health 123 (2009), pp. 302–305.

[14] World Health Organization, Everybody’s business: Strengthening health systems to improve health outcomes (Geneva: World Health Organization, 2007).

[15] Williams and Brian (2012, see note 10); Williams and Brian (2013, see note 10).

[16] P. Hunt and G. MacNaughton, Impact assessments, poverty and human rights: A case study using the right to the highest attainable standard of health (Geneva: UNESCO, 2006).

[17] Williams et al. (see note 9).

[18] T. Panch, P. Szolovits, and R. Atun, “Artificial intelligence, machine learning and health systems,” Journal of Global Health 8/2 (2018).

[19] C. Williams, “AI for good, but good for all?,” Human Rights, Big Data and Technology Project blog (June 18, 2019). Available at https://www.hrbdt.ac.uk/ai-for-good-but-good-for-all.

[20] W. Easterly, The white man’s burden: Why the West’s efforts to aid the rest have done so much ill and so little good (Oxford: Penguin, 2006).

[21] Williams (2019, see note 19).

[22] C. Williams, “Growing inequality and risks to social rights in our new data economy,” in G. MacNaughton and D. Frey (eds), Human rights and economic inequalities (Cambridge: Cambridge University Press, forthcoming).

[23] R. C. Mayo and J. Leung, “Artificial intelligence and deep learning: Radiology’s next frontier?,” Clinical Imaging 49 (2018), pp. 87–88; D. L. Labovitz, L. Shafner, M. Reyes Gil, et al., “Using artificial intelligence to reduce the risk of nonadherence in patients on anticoagulation therapy,” Stroke 48/5 (2017), pp. 1416–1419; L. Cosgrove, J. M. Karter, M. McGinley, and Z. Morrill, “Digital phenotyping and digital psychotropic drugs: Mental health surveillance tools that threaten human rights,” Health and Human Rights Journal 22/2 (2020).

[24] C. Williams, “AI’s role in COVID-19 poses human rights risks beyond privacy,” Human Rights, Big Data and Technology Project blog (September 1, 2020). Available at https://www.hrbdt.ac.uk/ais-role-in-covid-19-poses-human-rights-risks-beyond-privacy.

[25] Human Rights Council, Report of the Special Representative of the Secretary-General on the Issue of Human Rights and Transnational Corporations and Other Business Enterprises, John Ruggie, UN Doc. A/HRC/17/31 (2011).

[26] Office of the United Nations High Commissioner for Human Rights, OEIGWG chairmanship revised draft 16.7.2019: Legally binding instrument to regulate, in international human rights law, the activities of transnational corporations and other business enterprises. Available at https://www.ohchr.org/Documents/HRBodies/HRCouncil/WGTransCorp/OEIGWG_RevisedDraft_LBI.pdf.